OFFICE OF ADVANCED SIMULATION AND COMPUTING AND INSTITUTIONAL R&D PROGRAMS (NA-114)

Quarterly Highlights | Volume 5, Issue 3 | July 2022

In This Issue

Dedication of LLNL's ECFM project

NNSA and Cornelis Networks to collaborate on next-generation networking

LANL’s electrostatic discharge simulations become predictive with improved data

KCNSC’s high-fidelity model for the direct ink write printing process

LANL demonstrated a reactive burn model for simulations of HE detonation

SNL modeling improves predictions of turbulent pressure loadings on hypersonic re-entry vehicles

LANL/LLNL develop multiphase modeling framework validated against tin experiment

SNL’s semiconductor models support tunneling device development for future systems

LANL simulates Deflagration-to-Detonation Transitions, a grand-challenge in HE safety

SNL’s W80-4 Structural Dynamics analysts achieve 10-20x simulation speed-ups

SNL LDRD-funded seashell-inspired shield protects materials in hostile environments

LDRD supports tailor-made carbon nanomaterials at LLNL

LDRD Grand Challenge Project could transform electronics and solve energy challenges

ASC & LDRD Community—Upcoming Events (at time of publication)

- DOE AI for Science, Energy, and Security (AI4SES) Workshop at UC Davis; July 26-28*

- Joint LLNL/LANL Predictive Science Panel Meeting at LANL; August 9-11

- DOE AI4SES Workshop at Morgan State University; August 16-18*

- CORAL-2 Quarterly Review at ORNL; August 29-30

- Trilab CSSE/FOUS Planning Meeting at LLNL; September 13-14

- Production Simulation Initiative (PSI) Workshop at SNL-NM; September 21-22

- PSAAP III Annual Reviews at Multi-disciplinary Simulation Centers (MSCs), Single-Discipline Centers (SDCs), and Focused Investigatory Centers (FICs), late September – early November

- Stewardship Capability Delivery Schedule Summit at Y-12; October 12-13

- Supercomputing 2022 in Dallas, TX; November 13–18

- CORAL-2 quarterly review at LLNL; December 6-8

*Invitation only

Questions? Comments? Contact Us.

Welcome to the third 2022 issue of the NA-114 newsletter - published quarterly to socialize the impactful work being performed by the NNSA laboratories and our other partners. This issue begins with a highlight from Lawrence Livermore National Laboratory (LLNL) where the NNSA Administrator led the dedication of the Exascale Computing Facility Modernization (ECFM) in a ribbon-cutting ceremony marking the completion of this critical infrastructure project supporting exascale computing for the Stockpile Stewardship Program. After a two-year delay due to the COVID-19 pandemic, this quarter also saw the long-awaited return of the in-person ASC Principal Investigators (PI) meeting in Monterey, CA and at LLNL, carefully organized by Sandia National Laboratories (SNL) – the ASC community “group shot” is shown in the banner above. Other highlights include:

- A new $18 million contract for the NNSA labs and Cornelis Networks to collaborate on next-generation networking technologies.

- Los Alamos National Laboratory’s (LANL’s) data accuracy improvements increase the fidelity of electrostatic discharge simulations that could help inform safety margins for nuclear weapon dismantlement at Pantex.

- Kansas City National Security Campus’ (KCNSC’s) new high-fidelity modeling capability for the direct ink write (DIW) printing process, enabling process engineers to positively impact production.

- LANL’s demonstrated predictive reactive burn model supporting simulations for high explosive detonation studies.

Please join me in thanking the professionals who delivered the achievements highlighted in this newsletter and on an ongoing basis, all in support of our national security mission.

Thuc Hoang

NA-114 Office Director

NNSA Administrator Hruby dedicates LLNL’s Exascale Computing Facility Modernization project, aiding future certification and design efforts for Stockpile Stewardship.

On June 15th, NNSA Administrator Jill Hruby dedicated the Exascale Computing Facility Modernization (ECFM) project at LLNL. The ribbon-cutting ceremony marked the completion of this critical infrastructure project, which finished ahead of schedule and under budget, despite the COVID-19 pandemic.

The $100 million ECFM — LLNL’s first line-item project in more than a decade — is a significant boost to the power and water-cooling capacity of the Livermore Computing Center, preparing it to house exascale-class supercomputers that can perform more than one quintillion calculations per second, and will serve the high-performance computing (HPC) needs of the stockpile stewardship mission of NNSA.

With past and present leaders from NNSA’s and LLNL’s ASC programs looking on, Hruby congratulated the design, planning, and construction teams on completing the project, which will allow NNSA to field two exascale-class supercomputers simultaneously, including its first system named El Capitan, due to arrive at LLNL in 2023.

The next-generation machines will allow NNSA and the ASC program to meet the certification requirements of the Stockpile Stewardship Program and aid in future design efforts, Hruby said. “This kind of planning and execution in the modernization of our computing facilities enables us to move into the exascale era, and not only site El Capitan, but bring a second exascale-class system online in this next decade without having to turn off El Capitan first,” Hruby said. “Given the dependence of the NNSA mission on HPC, ECFM is a visible sign to the rest of the world of NNSA’s capability and intent to retain global leadership in HPC, which in turn contributes to our nation’s deterrent.”

LLNL’s ECFM project was completed nine months ahead of schedule and $9M under budget.

Hruby and other speakers acknowledged ECFM Project Manager, Anna Maria Bailey, and the teams involved in the endeavor from NNSA, the Livermore Field Office (LFO) and engineering and construction crews, crediting them for their ability to deliver on the project nine months ahead of schedule and $9M under budget, pandemic-related delays notwithstanding.

More than 15 years in planning and development, the ECFM project required an extensive permitting process, coordination with local utility companies, and the contributions of hundreds of people. In an area adjacent to Building 453, LLNL’s main computing facility, construction crews installed a 115-kilovolt transmission line, air switches, substation transformers, switchgear, relay control enclosures, 13.8-kilovolt secondary feeders, and cooling towers.

Declared complete by NNSA on June 8th, the ECFM infrastructure can supply the facility with 85 megawatts of electricity, up from the previous 45-megawatt capacity and enough to power a medium-sized city. It also expands the facility’s water-cooling system from 10,000 tons to 28,000 tons.
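For a rough sense of scale, the cooling capacities can be expressed in the same units as the electrical supply. The sketch below assumes that "tons" means tons of refrigeration (12,000 BTU per hour, about 3.517 kW each), which the article does not state explicitly. The function name is illustrative only.

```python
# Assumption: "tons" are tons of refrigeration, 1 ton = 12,000 BTU/h (about 3.517 kW).
KW_PER_TON = 3.516853


def cooling_megawatts(tons):
    """Convert a cooling capacity in tons of refrigeration to megawatts of heat removal."""
    return tons * KW_PER_TON / 1000.0


for label, tons in (("previous", 10_000), ("ECFM", 28_000)):
    print(f"{label}: {tons} tons is about {cooling_megawatts(tons):.1f} MW of heat removal")
```

Under that assumption, the expanded system removes roughly 98 MW of heat, compared with roughly 35 MW before, which is on the same order as the 85-megawatt electrical capacity.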

Lab Director Kim Budil called the ribbon-cutting a “fitting moment” for the Lab, marking the next step in its legacy of leadership in supercomputing and ushering in an era that will foster external collaboration, bring talent to the Lab and generate new scientific discoveries across numerous disciplines, including climate science, design of therapeutics and vaccines, artificial intelligence, and advanced manufacturing.

LLNL ASC Program Director Rob Neely, who emceed the event, recognized teams from NNSA, LLNL, LFO, the Western Area Power Administration, engineering/construction firms Burns & McDonnell and Nova Probst, and others for their work and perseverance in overcoming the challenges of COVID-19, wildfires, and supply chain disruptions. LFO Manager Pete Rodrik and LLNL Weapons and Complex Integration Principal Associate Director Brad Wallin also spoke at the event. Wallin commented on the significance of supercomputing and ECFM to the weapons program, saying that the upgrade will allow the Lab to pursue “new computing paradigms unconstrained by our infrastructure.” “ECFM is the bedrock to the computing foundation on which the future stockpile will be designed and assessed, and that will help the transformation of the nuclear security enterprise,” Wallin said.

After the program concluded, Hruby, Budil and Rodrik, alongside NNSA and LLNL ASC leadership and representatives from the design, engineering, and construction teams, performed the ceremonial ribbon cutting. (See the LLNL press release for more information.)

NNSA and Cornelis Networks to collaborate on next-generation high performance networking.

On May 4th, NNSA announced the award of an $18 million contract to Cornelis Networks for collaborative research and development in next-generation networking for supercomputing systems at the NNSA laboratories.

The Next-Generation High Performance Computing Network (NG-HPCN) project for the NNSA’s ASC program will enable NNSA to co-design and partner with Cornelis on development and productization of next-generation interconnect technologies for HPC. The project is led by LLNL for the NNSA Trilabs: LLNL, LANL, and SNL. The resulting networking technologies are expected to support mission-critical applications for HPC, artificial intelligence (AI), machine learning (ML), and high-performance data analytics. Applications could include stockpile stewardship, fusion research, advanced manufacturing, climate research, and other open science on future ASC HPC systems.

“We are pleased to be partnering with Cornelis Networks to co-develop advanced networking technologies which we anticipate will be well suited for the needs of the ASC program in the coming years,” said NNSA ASC Program Director Thuc Hoang. “As we move into exascale supercomputing and beyond, and increasingly rely on emerging technologies to achieve our mission objectives, we will need creative high-performance solutions to meet future challenges.”

The contract is part of the ASC post-Exascale-Computing-Initiative (ECI) investment portfolio, whose objective is to sustain the technology research and development momentum and strong engagement with industry that the DOE ECI had initiated via its PathForward program. It also will help foster a more robust domestic HPC ecosystem by increasing U.S. industry competitiveness in next-generation interconnect technologies.

“We expect this contract will have an impact on future ASC Advanced Technology and Commodity Technology Systems at the NNSA labs and will allow us to make key improvements in ASC application network performance,” said Matt Leininger, Senior Principal HPC Strategist at LLNL. “The NG-HPCN collaboration with Cornelis will enable the co-design of next-generation networks targeted towards NNSA’s scientific and engineering workloads.”

Representatives from Cornelis Networks said the funding will be used to collaborate with the NNSA laboratories to accelerate development of network simulation capabilities and highly performant adapter and switching technologies — including advanced features and in-network intelligence — and to enable open-source host software based on OpenFabrics Interfaces.

(For more information, see the LLNL press release)

More accurate atomic and molecular data from LANL’s models allow electrostatic discharge simulations to become predictive.

Such simulations will be used to underwrite more realistic safety procedures for nuclear weapon dismantlement at Pantex.

Electrostatic discharges (ESDs), or “sparks,” are a safety concern in the nuclear explosives operations (NEOPS) at Pantex. Some ESD safety guidelines have been based on non-physical assumptions and have led to procedures that are costly, that slow productivity, and that in some cases have led to plant shutdowns. This has motivated LANL to pursue the development of a high-confidence, predictive capability that would lead to a better understanding of the physical conditions related to ESD. Underwriting such guidelines, however, requires high-fidelity predictions of ESD events, and ESD is dominated by non-linear dynamics and non-equilibrium physics and involves multi-scale cooperative behavior.

In FY21, the ASC PEM atomic project joined forces with LANL’s weapons system safety group in developing tools for a physics-based reevaluation of the ESD guidelines. A well-recognized challenge to predictive air-gap ESD simulations stems from inaccurately capturing the physics of scattering. Ad-hoc remedies in which cross-sections are calibrated to reproduce the measured values of specific transport coefficients are not guaranteed to capture other transport characteristics, or to accurately describe ESD dynamics. For instance, in an electric field, the high-energy-tail of the electron-energy distribution (describing the electrons that can initiate the cascade of an electric break-down event) is sensitive to the angular distribution of electrons scattered elastically by the atoms and/or molecules of the atmosphere.

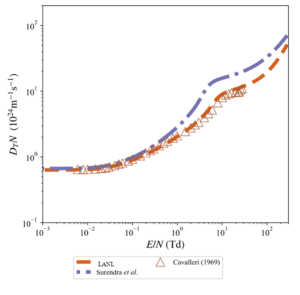

In a recent breakthrough, LANL calculated and implemented an accurate (adjustment-free) cross-section for a helium plasma, a result that shows promise for modeling elastic scattering (shown in Figure 3). The room-temperature transverse diffusion coefficient calculated with the new LANL model was assessed against high-precision measurements (shown by the triangle symbols) and against the (adjustment-free) implementation of the previous gold-standard model (Surendra). The new LANL model calculations compare well with measurements across a wide range of reduced electric field values without the need for ad-hoc adjustments or time-consuming calculations, which represents an important step forward in the development of a new capability for understanding electric discharge in NEOPS. (LA-UR-22-24114)

KCNSC developed a high-fidelity model for the direct ink write printing process, predicting pressure buildup and bead quality to increase efficiency and reduce the physical testing needed to dial in the production process.

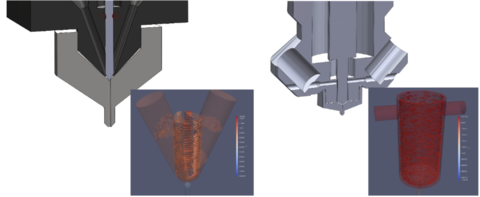

KCNSC has developed a high-fidelity model to predict the flow behavior of siloxane inks as they pass through a mixing unit for the purpose of optimizing mixing efficiency and extrusion parameters during the direct ink write (DIW) printing process. In-house materials testing capabilities were utilized to develop the material model and validate simulation parameters. Results from the model assisted with down-selecting the mixing nozzle designs (Figure 4) and optimizing production parameters, such as machine revolutions per minute (RPM), which reduces the number of pad prototypes that need to be produced. Simulation results were substantiated by subsequent observations of the physical printing process. Additionally, this capability offers critical information for the programming of involved DIW machinery, and initial simulation efforts have enabled the process engineers at the KCNSC to positively affect production. (NSC-614-4444 UUR)

LANL ASC developers have demonstrated a more predictive reactive burn model for challenging numerical simulations of high explosive detonation initiation and propagation.

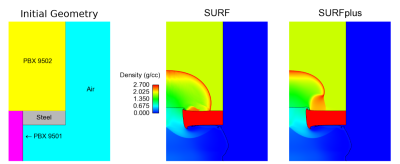

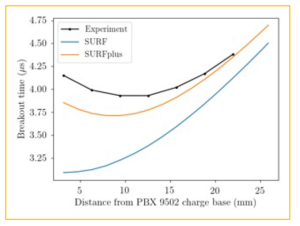

Reactive burn models have become vital tools for predicting the initiation, build-up, and propagation of detonation in high explosives (HE). These models allow insight into detonation phenomena not captured with traditional programmed burn models, such as dependence on charge geometry and confinement. Of particular interest are insensitive triaminotrinitrobenzene (TATB)-based explosives, which have slower reactions, resulting in longer reaction zones and detonation performance that is more sensitive to the initial temperature. To provide this predictive capability, the Scaled Uniform Reactive Front-plus (SURFplus) flow model has been implemented in the Free Lagrange (FLAG) hydrocode with verification and validation via small-scale experiments. The “plus” in this new model refers to the inclusion of carbon clustering in TATB-based PBX 9502 products. These carbon clusters had not been included in the earlier model but are important because they are associated with a separate and slower timescale for burning.

Corner turning, which occurs when a propagating detonation wave transitions from a smaller charge into a larger charge, is a particularly challenging simulation problem. The shock diffraction around a corner can result in a region of partially reacted or unreacted HE, known as a dead zone. In simulations of double-cylinder corner turning experiments performed at LLNL (Figure 5), it was observed that corner turning in the PBX 9502 charge is accurately predicted by the SURFplus model but not the SURF model (with a historical parameter set). More specifically, without the slow reactions, the detonation in the SURF model turns the corner too quickly. (LA-UR-22-25456)

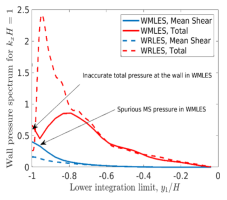

SNL improves predictions of turbulent pressure loadings on hypersonic re-entry vehicles with a 5-10X speedup via a near-wall turbulence modeling strategy using Wall-Modeled Large Eddy Simulation (WMLES).

SNL staff predicted turbulent pressure loadings using large eddy simulation (LES) of plane turbulent channel flow with a modeling approach that could eliminate a major computational cost for delivery system analysis and design. Improvements made to wall-modeled LES (WMLES) provide a mid-fidelity prediction of surface pressure fluctuations on hypersonic re-entry vehicles and other aerospace systems. A channel flow problem served as a surrogate for aerodynamics applications where turbulence near a solid surface generates unsteady pressure loadings that excite the adjacent structure. In this work, an expensive wall-resolved LES (WRLES) model was used as a reference solution, against which a less-expensive WMLES on a coarser mesh was compared. Results show WMLES provides a computational speed-up of a factor of five to ten, relative to WRLES for applications of interest. The WMLES analysis utilized a Green’s function approach to isolate the physical mechanisms and flow regions responsible for surface pressure fluctuations. Researchers found that the surface pressure spectrum at low frequencies, where structural response to pressure loadings is typically most sensitive, is overpredicted, and that the primary reason for the overprediction was a spurious mean-shear pressure source term present near the wall in the WMLES (noted in the figure on the right). Work to improve near-wall mean shear prediction with WMLES is ongoing. (SAND2022-4758 O)
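To illustrate why a wall model permits a coarser mesh, the sketch below shows one common textbook approach: an equilibrium log-law wall model that infers the wall shear stress from the velocity at the first off-wall grid point instead of resolving the near-wall layer. This is an assumption for illustration only. The article does not say which wall model SNL uses, and the constants, function names, and sample values are hypothetical.

```python
import math

KAPPA = 0.41  # von Karman constant (typical textbook value)
B = 5.2       # log-law intercept for a smooth wall (typical textbook value)


def friction_velocity(u_parallel, y, nu, tol=1e-10, max_iter=100):
    """Solve u/u_tau = ln(y*u_tau/nu)/KAPPA + B for u_tau with Newton's method.

    u_parallel: wall-parallel velocity at the first off-wall point
    y:          wall distance of that point (should lie in the log layer)
    nu:         kinematic viscosity
    """
    u_tau = math.sqrt(nu * u_parallel / y)  # laminar estimate as a starting guess
    for _ in range(max_iter):
        log_term = math.log(y * u_tau / nu) / KAPPA + B
        residual = u_tau * log_term - u_parallel
        slope = log_term + 1.0 / KAPPA  # derivative of the residual w.r.t. u_tau
        step = residual / slope
        u_tau -= step
        if abs(step) < tol * u_tau:
            return u_tau
    raise RuntimeError("log-law iteration did not converge")


def wall_shear_stress(rho, u_parallel, y, nu):
    """Wall shear stress tau_w = rho * u_tau**2 supplied to the coarse LES."""
    return rho * friction_velocity(u_parallel, y, nu) ** 2


# Hypothetical sample values (air-like fluid, first grid point 1 cm off the wall).
print(wall_shear_stress(rho=1.2, u_parallel=20.0, y=0.01, nu=1.5e-5))
```

Because the near-wall layer is modeled rather than resolved, the first grid point can sit well inside the log layer, which is where the mesh savings come from. The low-frequency overprediction described above is a reminder that such a shortcut can distort near-wall quantities.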

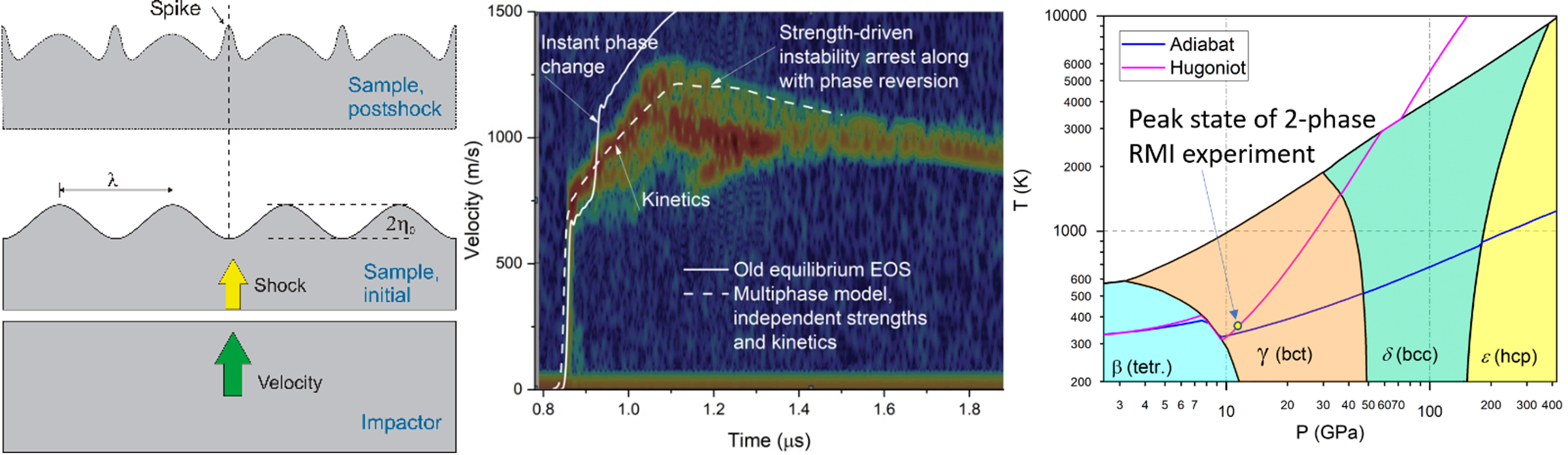

LANL and LLNL collaborated in the development of a multiphase modeling framework, implemented into the ASC multiphysics FLAG code and validated against a LANL experiment on tin.

Key stockpile materials pass through multiple solid phases during typical dynamic loading. While many current equations-of-state do predict those phase changes, they do not capture the coupled interaction between strength and equation of state, and the actual time required for the material to transform. Thanks to extensive collaboration across projects and programs, the ASC program at LANL created an impressive suite of capabilities in order to model strength in a solid that undergoes phase changes and may even exist with multiple phases present simultaneously. This work is part of the Tri-Laboratory Strength Project, with the experimental portion occurring simultaneously through the Dynamic Materials Properties (DMP) program.

A flexible new theory for multiphase and mixed-phase strength was developed in collaboration with LLNL ASC. The theory allows for separate strength models in each phase, but also accommodates high-pressure phases where only limited experimental measurements exist with simpler modeling options. The model also captures changes in the shear modulus with the phase changes, a crucial aspect of modeling strength. This multiphase modeling framework has been implemented into the ASC-developed multiphysics code, FLAG.

A LANL Richtmyer-Meshkov Instability experiment on tin (see Figure 8) provided the first test of the new capability on a multiphase experiment that was sensitive to both strength and kinetics. The improvement in predictive capability of the new multiphase model was striking when compared to the previous capability. Over the next four years as part of the Tri-laboratory Strength Project, ASC will continue to develop this modeling capability, integrate it with advanced experimental capabilities, and build the models for important materials. (LA-UR-22-23179)

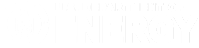

SNL develops band-to-band tunneling semiconductor models in the Charon semiconductor device simulator enabling accurate modeling of tunneling devices for use in future nuclear deterrent systems.

As semiconductor devices continue to shrink, emerging devices such as tunneling field effect transistors (TFETs), which are based on the band-to-band tunneling effect, have been explored as a way to reduce power consumption while delivering comparable drive current. These devices have great potential for use in future nuclear deterrent systems because of their low operating voltage and low power consumption. It is therefore important to be able to model band-to-band tunneling accurately and to predict the electrical responses of these tunneling devices. The Charon team at SNL implemented and verified a band-to-band tunneling (B2BT) modeling capability that is essential to understanding the electrical behavior of tunneling devices. An example of applying the B2BT model to an Esaki tunneling diode is shown in the figure below. The B2BT modeling capability allows Charon users to model TFETs and other tunneling devices to understand device responses and improve device designs for future nuclear deterrence applications. (SAND2022-5432 O)
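For readers unfamiliar with band-to-band tunneling models, the sketch below evaluates a Kane-type local generation rate, a form widely used in device simulators. It is offered as an assumption for illustration: the article does not state which B2BT formulation Charon implements, and the prefactor `a`, exponent factor `b`, and the normalized units are hypothetical placeholders rather than calibrated material parameters.

```python
import math


def kane_generation_rate(field, band_gap, a=1.0, b=1.0, gamma=2.0):
    """Kane-type band-to-band tunneling generation rate (arbitrary units).

    G = a * field**gamma / sqrt(band_gap) * exp(-b * band_gap**1.5 / field)

    field:    magnitude of the local electric field
    band_gap: semiconductor band gap
    a, b:     placeholder coefficients (hypothetical, not fitted to any material)
    gamma:    field exponent; 2.0 is commonly quoted for direct-gap tunneling
    """
    if field <= 0.0:
        return 0.0  # no field, no tunneling in this local model
    return a * field ** gamma / math.sqrt(band_gap) * math.exp(-b * band_gap ** 1.5 / field)


# The rate rises steeply with field and falls with band gap.
for field in (0.5, 1.0, 2.0):
    print(field, kane_generation_rate(field, band_gap=1.0))
```

The exponential dependence on field is what makes tunneling devices switch sharply at low voltage, and it is also why accurate field and band-gap inputs matter so much to the predicted current.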

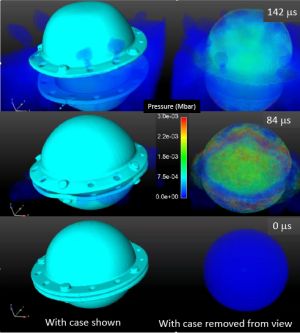

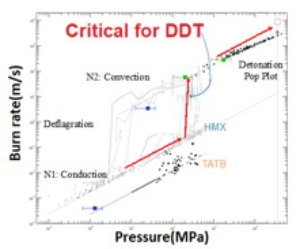

LANL scientists successfully simulate deflagration-to-detonation transitions, a grand-challenge in high explosive safety.

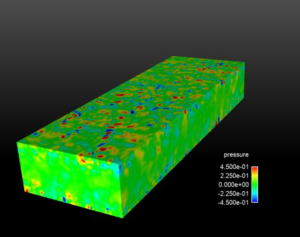

Efficient handling of high explosives (HE) requires predictive capability to understand the magnitude of reaction violence. Unlike prompt detonation scenarios, the physics of deflagration-to-detonation transitions (DDT) are only beginning to be modeled in ASC production codes. Using the LANL-developed Mechanically Activated Thermal CHemistry (MATCH) ignition-to-deflagration model, in conjunction with the Scaled Uniform Reactive Front (SURF) detonation model, LANL scientists have successfully simulated DDT, which is a grand challenge of utmost importance to safety considerations for conventional HE. To achieve a DDT event, explosive confinement is known to be a major factor; MATCH with SURF is being used to study the effects of confinement as shown in Figure 10 (left images of HE in a spherical steel case).

The Center-Ignited Spherical Mass Explosion (CISME)* experimental series was conducted at LANL to understand the impact of charge size and confinement on DDT. The explosive is enclosed in a spherical steel case in the CISME experiment, and by varying the number of steel clamping bolts the level of confinement of the ignited HE is altered, which in turn dictates the strength of the high explosive violent reaction (HEVR) fireball. For example, four bolts shown in the simulation in Figure 10 (left images, in light blue) would correspond to a weak confinement limit.

Burn rate is a metric of violence in HE reaction and has a strong dependence on confining pressure as there is positive feedback between the two: a high burn rate generates high pressure and a high pressure in turn enhances the burn rate. Moreover, experiments show that there is a jump in burn rate above a critical pressure pc, as shown in Figure 11. This jump is due to a transition from conductive to rapid convective burn: at sufficiently high pressure, burning explosive gas is forced into open cracks in the explosive, thus accelerating the burn rate. MATCH uses accumulated damage to incorporate this mechanism and thus achieve agreement with the CISME experiments. (LA-UR-22-23179)
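The feedback loop and the jump at the critical pressure can be pictured with a toy calculation. The sketch below is not the MATCH model. It assumes a simple power-law pressure dependence for the burn rate (often called Vieille's law), multiplies the rate by a fixed factor above the critical pressure to mimic the onset of convective burning, and couples pressure growth to burn rate with a forward-Euler loop. All parameter values and units are hypothetical.

```python
def burn_rate(p, p_c=100.0, a=0.01, n=1.0, jump=10.0):
    """Toy burn rate: power law a*p**n, multiplied by `jump` at or above p_c."""
    rate = a * p ** n
    return rate * jump if p >= p_c else rate


def pressurize(p0, dt, steps, coupling=1.0, **burn_kwargs):
    """Forward-Euler integration of dp/dt = coupling * burn_rate(p).

    Models the positive feedback in a confined charge: burning raises the
    pressure, and the higher pressure raises the burn rate.
    """
    history = [p0]
    p = p0
    for _ in range(steps):
        p += dt * coupling * burn_rate(p, **burn_kwargs)
        history.append(p)
    return history


# Pressure grows slowly until it crosses p_c, then runs away much faster.
trace = pressurize(p0=50.0, dt=1.0, steps=120)
for step in range(0, len(trace), 20):
    print(step, round(trace[step], 1))
```

In this picture, weaker confinement (fewer bolts in CISME) would correspond to pressure being relieved before it reaches the critical value, so the fast branch is never reached.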

* Holmes, Matthew David, Parker, Gary Robert Jr., Heatwole, Eric Mann, Feagin, Trevor Alexander, Broilo, Robert M., Dickson, Peter, Vaughan, Larry Dean, Erickson, Michael Andrew Englert: “Center-Ignited Spherical-Mass Explosion (CISME)”, LANL report (LA-UR-18-29651)

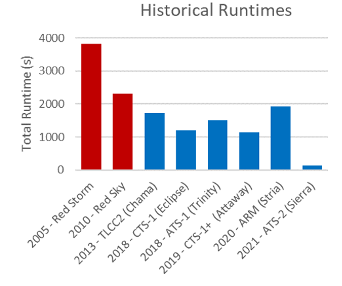

SNL’s W80-4 Structural Dynamics (SD) analysts achieve 10-20x speed-ups with the SIERRA/SD code running on ASC Sierra supercomputer, enabling previously infeasible workflows and new studies.

The W80-4 modeling and simulation (mod-sim) team uses the SIERRA/SD analysis code to simulate responses of the warhead in shock and vibration normal mechanical environments. Due to their complexity, these simulations can take more than two weeks to complete. Recent speed-ups achieved in SIERRA/SD, stemming from the “ATS-2 Goal for Weapon Simulation Codes FY21 L2 Milestone,” and the availability of the Trilab graphics processing unit-accelerated Sierra supercomputer at LLNL, provide run times up to 20 times faster than on the Commodity Technology System-1 (CTS-1) platforms (see the bar chart in Figure 12). This reduces the total compute time of the W80-4 models from weeks to overnight. The benefits of these speed-ups are many. For example, an analyst can start a run when leaving work and expect the results back the next day, rather than waiting days or weeks. More models or environmental cases can also be explored. This new efficiency has already impacted the W80-4, since analysts now routinely use complex, high-fidelity simulations to explore new areas of interest that were previously infeasible. These improvements enable the mod-sim team to make more informed decisions faster. Faster run times also mean a reduced load on the Trilab compute machines, benefitting all users in the nuclear weapons community. (SAND2022-8677 O)
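The "weeks to overnight" claim follows from simple arithmetic. The sketch below takes a two-week baseline, an assumed round figure since the article says only "more than two weeks", and applies the 10x and 20x speed-ups quoted in the headline. The function name is illustrative.

```python
def accelerated_hours(baseline_days, speedup):
    """Wall-clock hours for a run after applying a given speed-up factor."""
    return baseline_days * 24.0 / speedup


# Assumed baseline: a 14-day run on CTS-1.
for speedup in (10, 20):
    print(f"{speedup}x: {accelerated_hours(14, speedup):.1f} hours")
```

At 20x, a 14-day run takes 16.8 hours, which fits between leaving work and the next day. At 10x it takes 33.6 hours, closer to a day and a half.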

SNL LDRD-funded seashell-inspired shield protects materials in hostile environments.

Word of an extraordinarily inexpensive material, lightweight enough to protect satellites against debris in the cold of outer space, cohesive enough to strengthen the walls of pressurized vessels experiencing average conditions on Earth, and yet heat-resistant enough at 1,500 degrees Celsius (C) or 2,732 degrees Fahrenheit (F) to shield instruments against flying debris, raises the question: what single material could do all this? The answer is very thin layers of confectioners’ sugar from the grocers, burnt to a state called “carbon black,” interspersed between only slightly thicker layers of silica (which is the most common material on Earth), and baked. The result resembles a fine layer cake, or more precisely, the organic and inorganic layering of a seashell, each layer helping the next to contain and mitigate shock.

“A material that can survive a variety of insults — mechanical, shock and X-ray — can be used to withstand harsh environmental conditions,” said SNL’s Guangping Xu, who led development of the new coating. “We believe our layered nanocomposite, mimicking the structure of a seashell, is that answer.” Most significantly, Xu said, “The self-assembled coating is not only lightweight and mechanically strong, but also thermally stable enough to protect instruments in experimental fusion machines against their own generated debris where temperatures may be about 1,500 C.”

The coating, which can be layered on a variety of substrates without environmental problems, was the subject of an SNL patent application in June 2021, an invited talk at a pulsed power conference in December 2021, and a recent technical article in MRS Advances, of which Xu is lead author. The work was done in anticipation of the increased shielding that will be needed to protect test objects, diagnostics, and drivers inside the more powerful pulsed power machines of the future. SNL’s pulsed-power Z machine and its successors will certainly require still greater debris protection against forces that could compare to numerous sticks of dynamite exploding at close range. “The new shielding should favorably impact our nuclear survivability mission,” said paper author and SNL physicist Chad McCoy.

The inexpensive, environmentally friendly shield is light enough to ride into space as a protective layer on satellites because comparatively little material is needed to achieve the same resilience as heavier but less effective shielding currently in use to protect against collisions with space junk. “Satellites in space get hit constantly by debris moving at a few kilometers per second, the same velocity as debris from Z,” McCoy said. “With this coating, we can make the debris shield thinner, decreasing weight.”

Thicker shield coatings are durable enough to strengthen the walls of pressurized vessels when added ounces are not an issue. According to Xu, the material cost to fabricate a 2-inch-diameter coating of the new protective material, 45 millionths of a meter (45 microns) thick, is only 25 cents. In contrast, a beryllium wafer (the closest match to the thermal and mechanical properties of the new coating, and in use at SNL’s Z machine and other fusion locations as protective shields) costs $700 at recent market prices for a 1-inch-square, 23-micron-thick wafer, which is 3,800 times more expensive than the new film of the same area and thickness. Both coatings can survive temperatures well above 1,000 °C, but a further consideration is that the new coating is environmentally friendly. Only ethanol is added to facilitate the coating process. Beryllium creates toxic conditions, and its surroundings must be cleansed of the hazard after its use.

The principle of alternating organic and inorganic layers is key to strengthening the SNL coating. The organic sugar layers burnt to carbon black act like a caulk, said SNL manager and paper author Hongyou Fan. They also stop cracks from spreading through the inorganic silica structure and provide layers of cushioning to increase its mechanical strength.

Seashell-like coatings initially tested at SNL ranged from a few to 13 layers. These alternating materials were pressed against each other after being heated in pairs, so their surfaces crosslinked. Tests showed that such interwoven nanocomposite layers of silica and burnt sugar (carbon black), after pyrolysis, are 80% stronger than silica itself and thermally stable to an estimated 1,650 °C. Later sintering efforts showed that layers, self-assembled through a spin-coating process, could be batch-baked and their individual surfaces still crosslinked satisfactorily, removing the tedium of baking each layer separately. The more efficient process achieved very nearly the same mechanical strength. (SAND2022-5811E, see the latest LDRD/SDRD Quarterly Highlights Newsletter for additional information)

LDRD helps pave the way to tailor-made carbon nanomaterials and more accurate energetic materials modeling at LLNL.

Carbon exhibits a remarkable tendency to form nanomaterials with unusual physical and chemical properties, arising from its ability to engage in different bonding states. Many of these “next-generation” nanomaterials, which include nanodiamonds, nanographite, amorphous nanocarbon, and nano-onions, are currently being studied for possible applications spanning quantum computing to bio-imaging. Ongoing research suggests that high-pressure synthesis using carbon-rich organic precursors could lead to the discovery and possibly the tailored design of many more.

To better understand how carbon nanomaterials could be tailor-made and how their formation impacts shock phenomena such as detonation, LLNL scientists conducted ML-driven atomistic simulations to provide insight into the fundamental processes controlling the formation of nanocarbon materials, which could serve as a design tool, help guide experimental efforts, and enable more accurate energetic materials modeling.

Recent experiments on nanodiamond production from hydrocarbons subjected to conditions similar to those of planetary interiors offer some clues on possible carbon condensation mechanisms, but the landscape of systems and conditions under which intense compression could yield interesting nanomaterials is too vast to be explored using experiments alone.

In their analysis, the LLNL team found that liquid nanocarbon formation follows classical growth kinetics driven by Ostwald ripening (growth of large clusters at the expense of shrinking small ones) and obeys dynamical scaling in a process mediated by reactive carbon transport in the surrounding fluid. “The results provide direct insight into carbon condensation in a representative system and pave the way for its exploration in higher complexity organic materials, including explosives,” said LLNL researcher Rebecca Lindsey, co-lead author of the corresponding paper appearing in Nature Communications.

The team's modeling effort, an in-depth investigation of carbon condensation (precipitation) in oxygen-deficient carbon oxide (C/O) mixtures at high pressures and temperatures, was made possible by large-scale simulations using machine-learned interatomic potentials.

Carbon condensation in organic systems subject to high temperatures and pressures is a non-equilibrium process akin to phase separation in mixtures quenched from a homogeneous phase into a two-phase region, yet this connection has only been partially explored; notably, phase separation concepts remain very relevant for nanoparticle synthesis.

The team's simulations of chemistry-coupled carbon condensation and accompanying analysis address longstanding questions related to high-pressure nanocarbon synthesis in organic systems. “Our simulations have yielded a comprehensive picture of carbon cluster evolution in carbon-rich systems at extreme conditions, which is surprisingly similar to canonical phase separation in fluid mixtures, but also exhibit unique features typical of reactive systems,” said LLNL physicist Sorin Bastea, principal investigator of the project and a co-lead author of the paper.

“Directly simulating ion transport across heterogeneous interfaces remains technically challenging,” said LLNL materials scientist Stephen W. Weitzner, lead author of the paper. “In many instances, we need to rapidly and accurately compare the effects of different environmental conditions on ion transport processes, but current simulation strategies can be too resource-intensive for high-throughput studies. So, we developed a set of dynamic metrics that provide a fast and intuitive way to compare the relevant chemical features. Our analysis showed that the new set of dynamic metrics not only correctly encoded key physical behavior, but also could explain trends in ion behavior that were challenging to classify using conventional static descriptors.”

Weitzner added that beyond aiding the description and discussion of ion transport kinetics, the metrics provide useful targets for the development of ML-based force fields that could dramatically accelerate future simulations of metal ion dissolution and transport rates without loss of accuracy. More information is available in LLNL's news article from March 2022. (LLNL-WEB-458451)

LDRD Grand Challenge Project could transform electronics, solve energy challenges.

Can certain specialized fabrication techniques impact fields outside of quantum information science? As soon as SNL physicist Shashank Misra started to unpack this question, he discovered a monster of a problem that was difficult to solve with a conventional research project. Shashank decided to pursue his idea of taking qubit fabrication methods, originally developed for quantum computing by researchers in Australia and later adopted by SNL, and using them to make microelectronics.

The payoff could be huge — a fundamentally new way to build integrated circuits, power electronics, and other semiconductor devices. New quantum microelectronics could potentially be as transformative as quantum computers and quantum sensors. They could be a key to solving looming energy challenges.

The toolkit Shashank wanted to use is called atomic precision advanced manufacturing, which he and others simply call “APAM.” It’s a collective term for technologies that let researchers control where individual atoms go in a device. “The place where we ran into it headlong was when we would pitch application ideas where having atomic-scale control was really important. The pushback was there were too many open science questions that could prevent having a significant impact… up to that time nobody had shown that devices could work outside of a cryostat,” Shashank said, referring to a machine that maintains ultra-low temperatures. Shashank decided to tackle some of the open science questions by pulling together a cross-disciplinary team, an approach perfectly suited for a Grand Challenge LDRD.

The FAIR DEAL Grand Challenge, short for Far-reaching Application, Implication and Realization of Digital Electronics at the Atomic Limit, ran from FY19 through FY21. With a team of 36 people over three years, Shashank aimed to answer three main questions: (1) Can SNL-developed atomic precision advanced manufacturing build a device that is compatible with complementary metal-oxide-semiconductor (CMOS) technology, the industry-standard method of semiconductor manufacturing? (2) Can it be accomplished in a practical environment (i.e., not at cryogenic temperatures)? (3) Does the final product do anything special that a conventional device can’t? Shashank never expected the answers would be yes, yes, and yes. The FAIR DEAL team successfully built a microchip in which an APAM nanodevice worked directly in concert with a CMOS circuit built at the Microsystems Engineering, Science and Applications Complex. Not only did the combined circuit work as planned, and at room temperature, but the team also demonstrated that APAM devices shouldn’t compromise the robustness of the microchip.

In 2021, a spin-off project began with DOE’s Advanced Manufacturing Office, aimed at extending what FAIR DEAL began toward energy-efficient microelectronics. SNL has also begun several collaborations with university partners, and the team is in talks with potential sponsors about whether this new way of processing silicon could create opportunities to implement new kinds of hardware trust and security measures.

Shashank says that his team’s success has opened even more scientific questions that need to be studied. “We have atomic-scale control over some elements in silicon,” he said. “And I think the science opportunities that other people are pursuing on the project at this point are looking at whether we can have atomic-scale control in every material. There’s a lot of thought that has to go into how you do that, but I do think that’s where the science is headed.” (SAND2022-1967L)

Questions? Comments? Contact Us.

NA-114 Office Director: Thuc Hoang, 202-586-7050

- Integrated Codes: Jim Peltz, 202-586-7564

- Physics and Engineering Models/LDRD: Anthony Lewis, 202-287-6367

- Verification and Validation: David Etim, 202-586-8081

- Computational Systems and Software Environment: Si Hammond, 202-586-5748

- Facility Operations and User Support: K. Mike Lang, 301-903-0240

- Advanced Technology Development and Mitigation: Thuc Hoang